The Cubic and Polymorphic Encryption Systems

For the past few years I’ve been wondering why encryption systems, even though they were considered stable at the time they were released or made known to the public, mostly turned out to be breakable, either through fast computing systems or through some ingenious tricks.

Then last year I thought that if the inventors of encryption systems can never be sure of their systems, or can never fully solidify them, then perhaps those systems should be encrypted as well.

What I mean is that we can perhaps create an encryption system that is never constant or static, one where how the encryption proceeds depends on another variable, for example the password or the encryption key itself.

To make things simpler, we can generate a list of encryption methods from the password, from part of the password, or from a seed derived from the password by some special algorithm, and then use the methods in that list to encrypt the target plain text. To decrypt, we apply the inverse of each method with the list in reverse order. A different password will form a different sequence of methods and is unlikely to decrypt the encrypted text.
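
The idea above can be sketched in a few lines of Python. This is only a toy illustration, not a secure cipher: the three methods (`_xor`, `_add`/`_sub`, `_rotl`/`_rotr`), the function names, the SHA-256 seeding, and the `min_len`/`max_len` parameters are all my own assumptions made up for the example, and Python's `random` module is not a cryptographic generator.

```python
import hashlib
import random

# Hypothetical methods: each takes (data, k) where k is a per-step parameter.
def _xor(data, k):
    return bytes(b ^ k for b in data)

def _add(data, k):
    return bytes((b + k) % 256 for b in data)

def _sub(data, k):
    return bytes((b - k) % 256 for b in data)

def _rotl(data, k):
    if not data:
        return data
    n = k % len(data)
    return data[n:] + data[:n]

def _rotr(data, k):
    if not data:
        return data
    n = k % len(data)
    return data[-n:] + data[:-n] if n else data

# Each entry is an (encrypt, decrypt) pair; XOR is its own inverse.
METHODS = [(_xor, _xor), (_add, _sub), (_rotl, _rotr)]

def build_schedule(password, min_len=8, max_len=16):
    """Derive a password-dependent list of (method index, parameter) steps."""
    digest = hashlib.sha256(password.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest, "big"))
    length = rng.randint(min_len, max_len)
    return [(rng.randrange(len(METHODS)), rng.randint(1, 255))
            for _ in range(length)]

def encrypt(plain, password):
    data = plain
    for idx, k in build_schedule(password):
        data = METHODS[idx][0](data, k)
    return data

def decrypt(cipher, password):
    data = cipher
    # Undo the steps last-to-first, using each method's inverse.
    for idx, k in reversed(build_schedule(password)):
        data = METHODS[idx][1](data, k)
    return data
```

Calling `decrypt(encrypt(b"some text", "my password"), "my password")` gives back the original bytes, because both sides rebuild the same list from the same password.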

An obvious difficulty for cracking should be noticed here. Cracking programs will be difficult to optimize and could take an incredibly long time to finish a single crack. To crack successfully, a program must first guess the sequence of methods and then also find the password using the methods in that sequence.

Note that enhancements can also be added to the encryption, like forwarding a seed to the next block of plain text, taken from the whole or part of an encryption result, either after the last method or at a random point in the list of methods. These enhancements should be carefully examined though, as they sometimes only look like enhancements and actually make the system weaker.
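
Here is a rough sketch of the seed-forwarding enhancement, again under my own assumptions: the block size `BLOCK`, the helper names, and the simple byte-offset transform are hypothetical stand-ins for a real list of methods. The seed for each block is taken from the previous block's encrypted result, so decryption can rebuild it from the cipher text alone.

```python
import hashlib
import random

BLOCK = 8  # hypothetical block size in bytes

def _next_seed(seed, cipher_block):
    # Forward a new seed made from the old seed and the encrypted block.
    return hashlib.sha256(seed + cipher_block).digest()

def _offsets(seed, n):
    # Stand-in for "the methods": n pseudo-random byte offsets from the seed.
    rng = random.Random(int.from_bytes(seed, "big"))
    return [rng.randrange(256) for _ in range(n)]

def encrypt_chained(plain, password):
    seed = hashlib.sha256(password.encode("utf-8")).digest()
    out = []
    for i in range(0, len(plain), BLOCK):
        block = plain[i:i + BLOCK]
        offs = _offsets(seed, len(block))
        cipher_block = bytes((b + o) % 256 for b, o in zip(block, offs))
        out.append(cipher_block)
        seed = _next_seed(seed, cipher_block)
    return b"".join(out)

def decrypt_chained(cipher, password):
    seed = hashlib.sha256(password.encode("utf-8")).digest()
    out = []
    for i in range(0, len(cipher), BLOCK):
        cipher_block = cipher[i:i + BLOCK]
        offs = _offsets(seed, len(cipher_block))
        out.append(bytes((b - o) % 256 for b, o in zip(cipher_block, offs)))
        # The same forwarded seed is recomputed from the cipher block.
        seed = _next_seed(seed, cipher_block)
    return b"".join(out)
```

With this chaining, each block is transformed under a seed that depends on everything encrypted before it, which is the kind of enhancement that still needs careful examination before trusting it.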

Generally, with random methods, the probability of finding the key should come close to 0, at least with a strong implementation. Also, with this concept, the boundaries that limit specific encryptions can be broken; a new implementation can easily be made from another working implementation by adding new methods and/or just changing parameters like the minimum and maximum limits of the list.

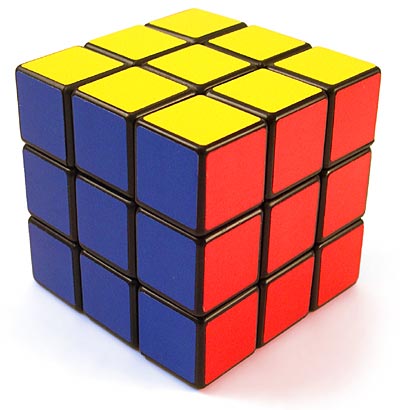

Out of this idea there are many possible implementations we can create, and one of them would be basing an encryption system on an object like a cube. Here we process every part of the plain text by putting it in an imaginary space like the cube, and then we manipulate the cube based on the list of methods generated from the password. In the methods we can do many things, including both the things we can do on a Rubik’s Cube and the password-driven operations that other encryption systems apply, like the ones in AES.
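
To make the cube idea more concrete, here is a minimal sketch that covers only the Rubik's-style part: it places one 27-byte block in a 3×3×3 cube and turns layers according to a password-derived list of moves. The cube size `N`, the move count, and every function name are assumptions for illustration. Since layer turns only rearrange the bytes, a real implementation would also need the value-changing, AES-like methods mentioned above.

```python
import hashlib
import random

N = 3  # hypothetical cube edge length; one block holds N**3 = 27 bytes

def to_cube(block):
    if len(block) != N ** 3:
        raise ValueError("block must be exactly N**3 bytes")
    return [[[block[(z * N + y) * N + x] for x in range(N)]
             for y in range(N)] for z in range(N)]

def from_cube(cube):
    return bytes(cube[z][y][x]
                 for z in range(N) for y in range(N) for x in range(N))

def _cell(axis, layer, a, b):
    # Map 2D coordinates (a, b) inside a layer to cube indices (z, y, x).
    if axis == 0:
        return (layer, a, b)
    if axis == 1:
        return (a, layer, b)
    return (a, b, layer)

def turn_layer(cube, axis, layer, quarter_turns):
    """Rotate one layer of the cube in place by a number of quarter turns."""
    for _ in range(quarter_turns % 4):
        old = [[0] * N for _ in range(N)]
        for a in range(N):
            for b in range(N):
                z, y, x = _cell(axis, layer, a, b)
                old[a][b] = cube[z][y][x]
        for a in range(N):
            for b in range(N):
                z, y, x = _cell(axis, layer, a, b)
                cube[z][y][x] = old[N - 1 - b][a]

def moves_from_password(password, count=20):
    digest = hashlib.sha256(password.encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest, "big"))
    # Each move is (axis, layer, quarter turns in 1..3).
    return [(rng.randrange(3), rng.randrange(N), rng.randint(1, 3))
            for _ in range(count)]

def encrypt_block(block, password):
    cube = to_cube(block)
    for axis, layer, turns in moves_from_password(password):
        turn_layer(cube, axis, layer, turns)
    return from_cube(cube)

def decrypt_block(block, password):
    cube = to_cube(block)
    # Undo the moves last-to-first; 4 - turns completes each full rotation.
    for axis, layer, turns in reversed(moves_from_password(password)):
        turn_layer(cube, axis, layer, 4 - turns)
    return from_cube(cube)
```

A longer plain text would be split into 27-byte blocks (with some padding scheme, not shown here) and each block would go through its own cube.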

With these mechanisms and the randomization of methods, an implementation on a cube should already be strong enough, but how about if we also apply it to other figures, like the one that has eight faces instead of the cube's six (an octahedron), and the other polyhedrons?

Also, how about if we make the figures polymorphic, so that the object can convert from one figure to another and back?

Obvious pros regarding this concept:

- Far less predictable

- Far less crackable

- Very flexible

- Could be a candidate for a good encryption system that protects data against new superfast computing systems

Obvious cons:

- Not easy, or at least less easy, to implement, especially in embedded systems

- The stronger and more random the implementation, the slower it runs and the more difficult it is to stabilize and/or optimize

- Very matrix-heavy and mind-squeezing, especially when making the code extremely solid and consistent